Abstract

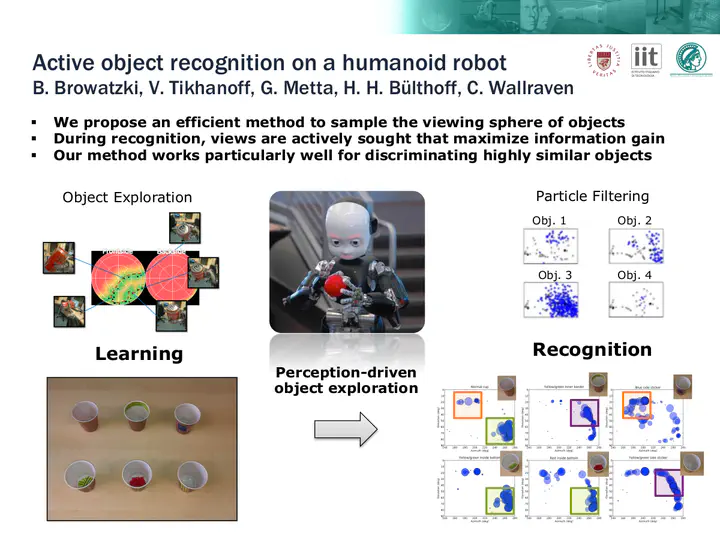

Interacting with its environment is a key requirement for a humanoid robot. In particular, the ability to recognize and manipulate unknown objects is crucial for working successfully in natural environments. Visual object recognition, however, remains a challenging problem, because three-dimensional objects often give rise to ambiguous two-dimensional views. Here, we propose a perception-driven, multisensory exploration and recognition scheme that actively resolves the ambiguities emerging at certain viewpoints. We define an efficient method to acquire two-dimensional views in an object-centered task space and to sample characteristic views on a view sphere. Information is accumulated during the recognition process and used to select the actions expected to be most beneficial for discriminating between similar objects. In addition to visual information, we take proprioceptive information into account to create more reliable hypotheses. Simulation and real-world results clearly demonstrate that active, multisensory exploration is more efficient than passive, vision-only recognition methods.

Nominated for Best Vision Paper award.